Add the search peers to the serverssetting under the Specify the peers as a set of comma-separated values (host names or IP addresses with management ports).#Edit nf To add the search peers to search head a) On the search head, create or edit a nf file in $SPLUNK_HOME/etc/system/local. You must run this command for each search peer on search head that you want to add. Splunk add search-server –auth admin:password –remoteUsername admin –remotePassword The remote credentials must be for an admin-level user on the search peer. Use the -remoteUsername and -remotePassword flags for the credentials for the search peer.Use the -auth flag to provide credentials for the search head.is the management port of the search peer.is the host name or IP address of the search peer’s host machine.Splunk add search-server ://: -auth : -remoteUsername -remotePassword If everything goes good, then you could see the list of search peers on your search head as below, # Using CLI Repeat for each of the search head’s search peers.

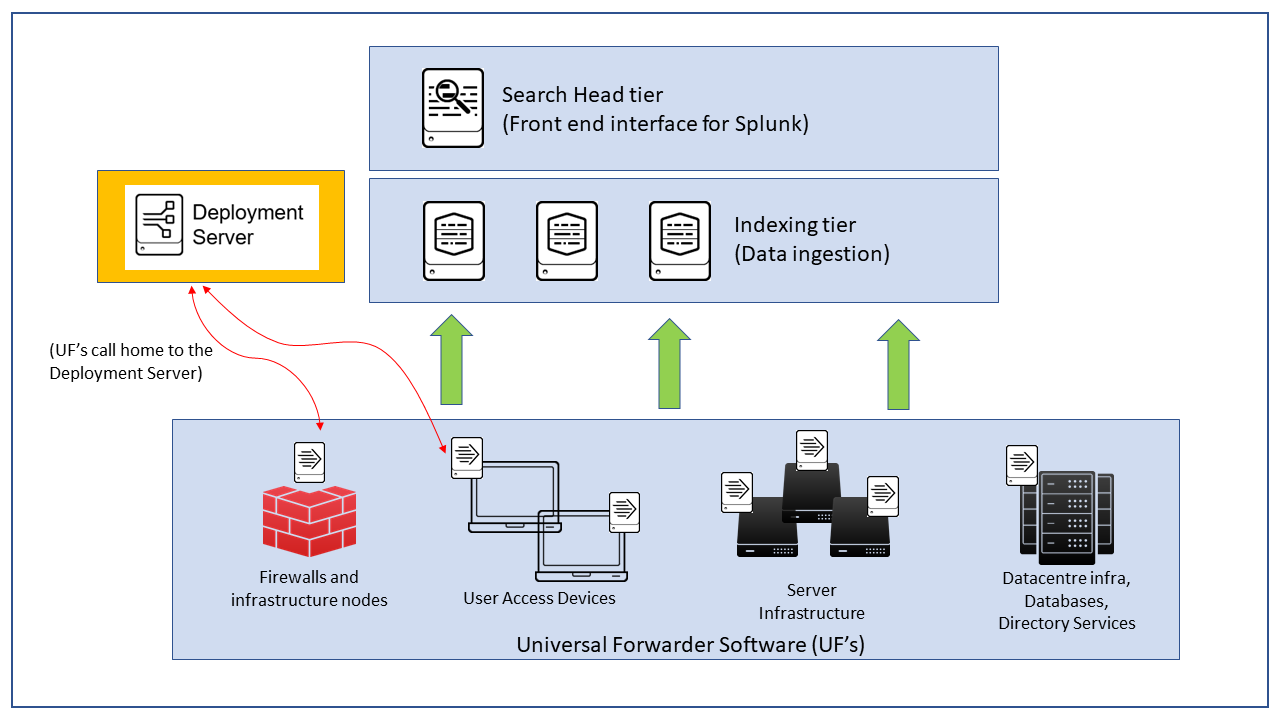

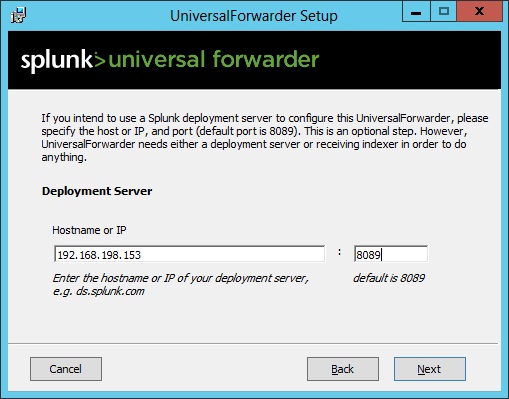

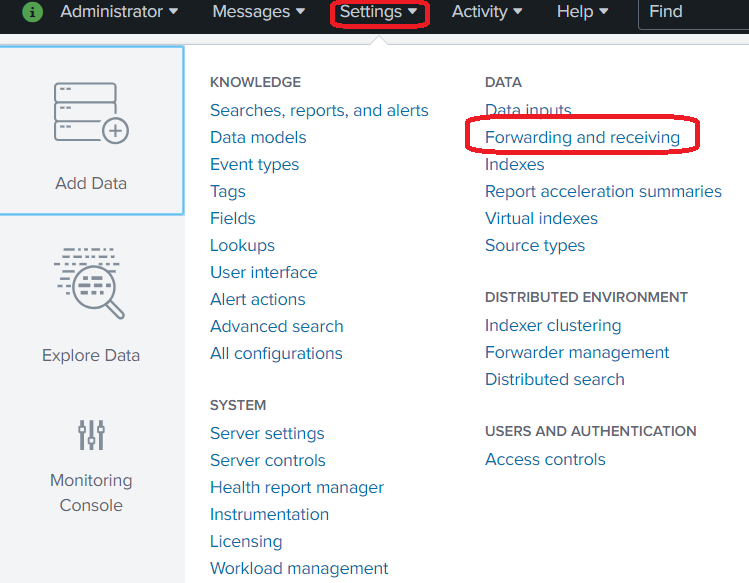

Note: You must precede the search peer’s host name or IP address with the URI scheme, either “http” or “https”. Specify the search peer, along with any authentication settings.Click Distributed searchin the Distributed Environment area.Log into Splunk Web on the search head and click Settings at the top of the page. There are 3 ways to add search peers to search head,ģ)Directly from configuration file ( nf) #Steps to add search peers to search head from WEB UI Once all these instances ready you need to add search peers or indexers to search head. 1 for search head and 3 more for indexers or search peers. Add connection_host = none in inputs.Distributed search provides a way to scale your deployment by separating the search management and presentation layer from the indexing and search retrieval layer.Ĭreate 4 splunk enterprise individual instances.Change ServerName in Splunk config files.In short, if you’re crazy enough to change the name of one of your indexers in a distributed Splunk environment, make sure you do the following: Simply restarting Splunk on the search head fixed it. Success! Something in the search head must have made it blind to the indexer once its name had changed. I finally thought to reload Splunk on the search head that had been talking to the server whose name I changed. It was creating a blind spot.Ĭonnections great, search status great, deployment status good. Everything looked good in the distributed search arena – status was OK on all indexers yet I still was not getting any results from the indexer whose name I had changed, even though it was receiving events! This was turning into a problem. My indexer was forwarding back to the forwarder everything it was getting from the forwarder, causing Splunk to shut down port 9997 on the offending indexer completely.Īfter getting all that set up I noticed Splunk was only returning searches from the indexers whose hostnames I had not changed. 
The blacklist had the old hostname, which meant when I changed the indexer’s hostname it no longer matched the blacklist and thus was deployed a forwarder’s configuration, causing a forwarding loop. That shouldn’t’ have been there! Digging into my deployment server I discovered that I had a server class with a blacklist, that is, it included all deployment clients except some that I had listed. I discovered this little gem of a stanza: /opt/splunk/etc/apps/APP_Forwarders/default/nf I was pulling my hair out trying to figure out what was happening. Finally I discovered this gem on Splunk Answers:Īre you using the deployment server in your environment? Is it possible your forwarders’ nf got deployed to your indexer? Meanwhile no events are getting stored on that indexer. Attempting to telnet from the forwarder to the indexer in question revealed the same results – works at first, then quit working. I edited $SPLUNK_HOME/etc/system/local/nf with the following: īut I also noticed that after I ran the command a short time later it was no longer listening on 9997. I found this answer indicating it could be an issue with DNS tripping up on that server. Revealed that the server was not even listening on 9997 like it should be. WARN TcpOutputFd - Connect to 10.0.0.10:9997 failed. I noticed right away Splunk complained of a few things: TcpOutputProc - Forwarding to indexer group default-autolb-group blocked for 300 seconds. Sed -i 's///g' $SPLUNK_HOME/etc/system/local/nf Modify /etc/system/network to make it persistent (CentOS specific).It can be pretty nasty! Below is my experience. Recently I set about to change the hostname on a Splunk indexer.
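Since the CLI method needs one `splunk add search-server` run per search peer, a small loop can save some typing. The following is only a dry-run sketch: it prints the commands rather than executing them. The peer addresses, the credentials, and the function names are hypothetical placeholders, and the management port is assumed to be 8089 (Splunk's usual default), so adjust everything to your own environment.

```shell
#!/bin/sh
# Dry-run sketch: print one `splunk add search-server` command per search peer.
# Every host, port, and credential below is a hypothetical placeholder.

SCHEME="https"                          # URI scheme that must precede the peer address
MGMT_PORT="8089"                        # assumed management port of each search peer
PEERS="10.0.0.11 10.0.0.12 10.0.0.13"   # hypothetical search peers (3 indexers)

# Build the command line for a single search peer.
build_add_peer_cmd() {
    peer_host="$1"
    printf 'splunk add search-server %s://%s:%s -auth %s -remoteUsername %s -remotePassword %s\n' \
        "$SCHEME" "$peer_host" "$MGMT_PORT" \
        "admin:password" "admin" "peer_password"
}

# Print the command for every peer so it can be reviewed before running it for real.
print_all_cmds() {
    for peer in $PEERS; do
        build_add_peer_cmd "$peer"
    done
}

print_all_cmds
```

Once the printed commands look right, they can be run on the search head one by one. Printing first keeps a typo in a password or port from half-registering the peers.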
